As organizations move to cloud-based analytics and artificial intelligence (AI), collecting data efficiently from multiple sources has become increasingly important, and AWS data ingestion tools are central to that work.

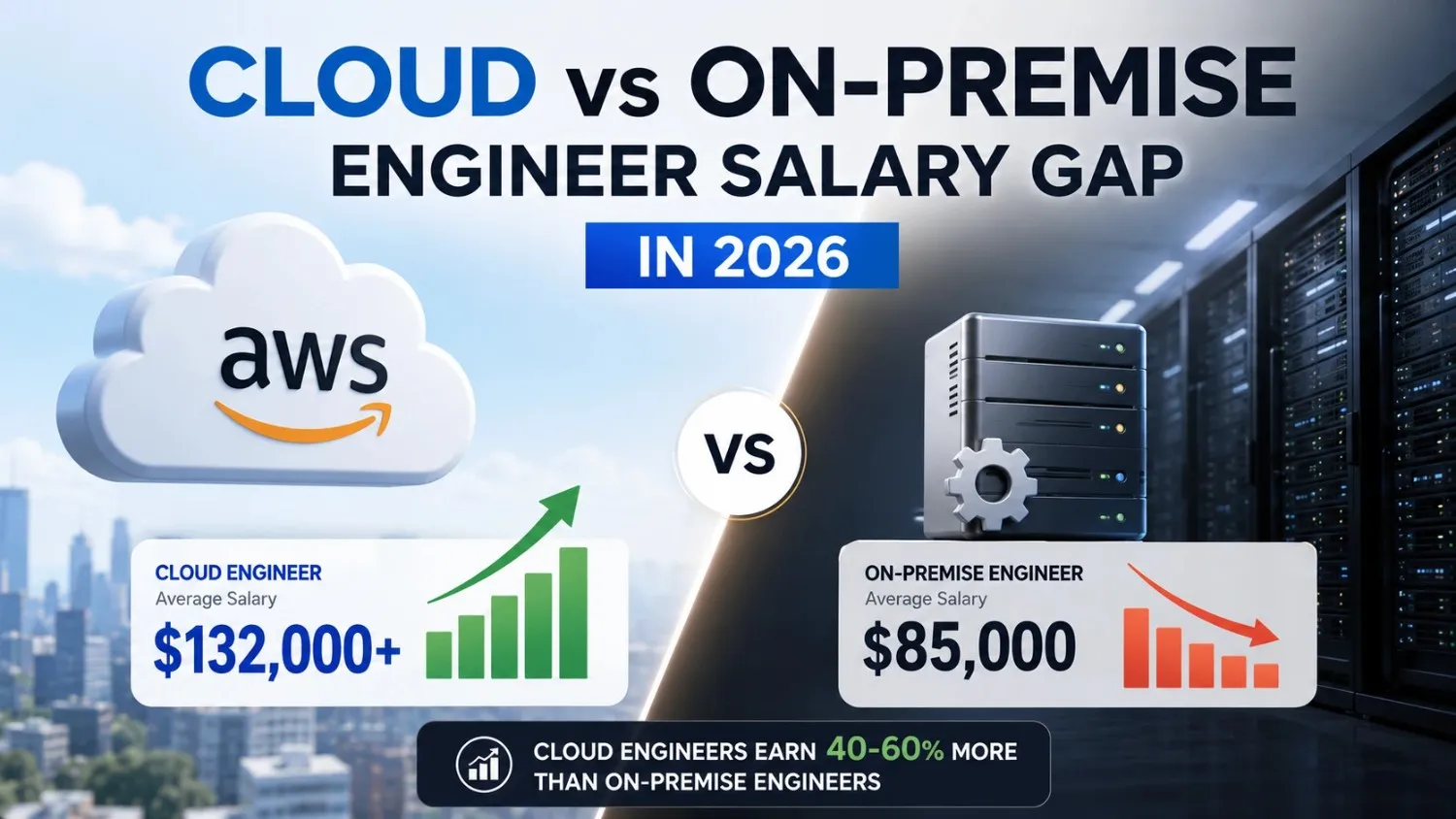

AWS data ingestion is now a core competency for cloud engineers, data engineers and DevOps professionals, so it is no surprise that recruiters place greater emphasis on testing candidates' knowledge of AWS data ingestion architectures, storage integrations and processing workflows.

In this blog, we give an overview of common AWS data ingestion interview questions, along with detailed answers and the reasons you should expect these questions in a technical interview.

1. What is AWS Data Ingestion?

Answer: AWS data ingestion is the process of collecting and transferring diverse data from multiple sources, both on-premises and cloud-based, into AWS. Ingestion is the first stage of an AWS data pipeline: it lands data where it can be stored and analyzed, using services such as Amazon S3, AWS DataSync and Amazon Kinesis.

Key aspects of AWS data ingestion include:

- Purpose: The main purpose is to aggregate data from databases, APIs, logs and IoT devices into a centralized repository for analysis and processing.

- Types of Data: Supports homogeneous data, such as IoT sensor readings, and heterogeneous data, such as e-commerce data.

- Real-time Streaming: Amazon Kinesis Data Firehose or Kinesis Data Streams are used for immediate data processing and analysis.

- Batch Transfer: AWS DataSync or AWS Snow Family are used for moving large volumes of data.

- Data Migration: AWS Database Migration Service (DMS) is used for moving data from on-premises databases.

- Benefits: It eliminates data silos, provides a unified view of data and enables data-driven decision-making.

2. What are the main types of AWS Data Ingestion?

Answer: There are three main types of AWS data ingestion. Each is designed to deliver data into services such as Amazon Simple Storage Service (S3), Amazon Redshift and Amazon Kinesis.

The following are the types of AWS data ingestion:

- Streaming Ingestion: This method collects data as soon as it is generated, such as from IoT sensors, logs or real-time monitoring. Common tools are Amazon Kinesis Data Streams, Amazon Kinesis Data Firehose and Amazon Managed Streaming for Apache Kafka (MSK).

- Batch Ingestion: This method collects data over a period of time and loads it on a set schedule rather than continuously. Common tools include AWS Glue, AWS DataSync and the AWS Snow Family.

- Hybrid or Event-Driven Ingestion: This method combines streaming and batch ingestion. It is a specialized form of push-based ingestion in which a particular event, such as an order being placed, triggers the ingestion.
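To make the streaming versus batch distinction concrete, here is a minimal Python sketch. It assumes the clients are boto3 clients (for example `boto3.client("kinesis")` and `boto3.client("s3")`) passed in by the caller. The stream name, bucket name, `device_id` field and `raw/...` key layout are hypothetical, chosen only for illustration.

```python
import json
from datetime import datetime, timezone


def send_streaming_event(kinesis_client, stream_name, event):
    """Streaming ingestion: push one event as soon as it is generated."""
    return kinesis_client.put_record(
        StreamName=stream_name,
        Data=json.dumps(event).encode("utf-8"),
        # Records with the same partition key land on the same shard.
        PartitionKey=str(event["device_id"]),  # hypothetical field name
    )


def upload_batch(s3_client, bucket, events, now=None):
    """Batch ingestion: write events collected over a period as one object."""
    now = now or datetime.now(timezone.utc)
    # Hypothetical date-based key layout; one JSON document per line.
    key = now.strftime("raw/%Y/%m/%d/events-%H%M%S.jsonl")
    body = "\n".join(json.dumps(e) for e in events).encode("utf-8")
    s3_client.put_object(Bucket=bucket, Key=key, Body=body)
    return key
```

An event-driven setup would typically call a function like `upload_batch` or `send_streaming_event` from a handler that fires when the triggering event occurs, rather than on a timer.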

3. Which services are used for AWS Data Ingestion?

Answer: AWS offers various data ingestion services designed for different data types and sources. These services generally land data in Amazon S3 or Amazon Redshift.

Here are the most commonly used AWS data ingestion services and their use cases:

- Amazon Kinesis Data Firehose (renamed Amazon Data Firehose in 2024): This is the easiest way to load streaming data into AWS destinations (S3, Redshift, OpenSearch). It handles scaling, buffering and lightweight transformations automatically.

- Amazon Kinesis Data Streams: Designed for real-time and low-latency streaming applications, allowing complex processing before storage.

- Amazon Managed Streaming for Apache Kafka (MSK): A managed service for running Apache Kafka, ideal for real-time data pipelines.

- AWS Glue: A serverless ETL (Extract, Transform, Load) service that transforms and loads large batch data sets from various sources into data lakes.

- AWS Database Migration Service (DMS): Migrates data from on-premises databases to cloud databases or S3 with minimal downtime.

- AWS Snow Family (Snowball Edge): Physical devices used to move petabytes of data to AWS when network bandwidth is limited.
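As an illustration of the Firehose option, the sketch below sends events through the `PutRecordBatch` API, which accepts at most 500 records per call. It assumes `firehose_client` is a boto3 Firehose client (`boto3.client("firehose")`); the delivery stream name and the helper names are hypothetical.

```python
import json

MAX_RECORDS_PER_CALL = 500  # PutRecordBatch accepts at most 500 records per request


def chunked(items, size):
    """Yield consecutive slices of at most `size` items."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


def send_to_firehose(firehose_client, delivery_stream, events):
    """Send events in PutRecordBatch-sized chunks; return the failed-record count."""
    failed = 0
    for chunk in chunked(events, MAX_RECORDS_PER_CALL):
        response = firehose_client.put_record_batch(
            DeliveryStreamName=delivery_stream,
            # A trailing newline keeps records separable after Firehose
            # concatenates them into one delivered object.
            Records=[{"Data": (json.dumps(e) + "\n").encode("utf-8")} for e in chunk],
        )
        failed += response.get("FailedPutCount", 0)
    return failed
```

A non-zero return value means some records were rejected and should be retried; this sketch only counts them.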

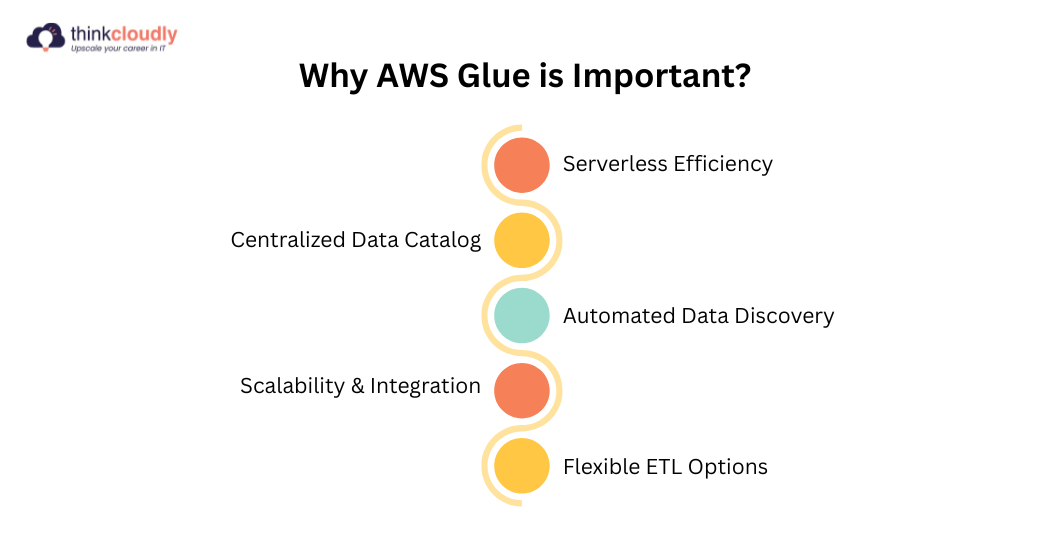

4. What is AWS Glue and why is it important?

Answer: AWS Glue is a serverless ETL (Extract, Transform, Load) service with a unified Data Catalog. By automating ETL, it makes AWS data ingestion more efficient and speeds up the processing of large data sets, which lowers the cost for businesses of building applications, training machine learning models or simply analyzing large numbers of records.
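In practice, a Glue ETL job is often started from a scheduler or another service. The following is a small sketch of that step, assuming `glue_client` is a boto3 Glue client (`boto3.client("glue")`) and that a job already exists. The job name and the `--source_path` and `--target_path` arguments are hypothetical; a real job defines its own arguments.

```python
def start_glue_job(glue_client, job_name, source_path, target_path):
    """Start one run of an existing Glue job and return its run ID."""
    response = glue_client.start_job_run(
        JobName=job_name,
        # Job arguments are passed as "--name": "value" pairs.
        Arguments={"--source_path": source_path, "--target_path": target_path},
    )
    return response["JobRunId"]


def job_state(glue_client, job_name, run_id):
    """Return the current state of a job run, such as RUNNING or SUCCEEDED."""
    response = glue_client.get_job_run(JobName=job_name, RunId=run_id)
    return response["JobRun"]["JobRunState"]
```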

5. What is Amazon Kinesis and when should it be used?

Answer: Amazon Kinesis collects data from many sources at the same time and processes it as it arrives (in real time). Examples of real-time data include Internet of Things (IoT) telemetry, website clickstreams and application logs. With this service, you can collect and analyze information in less time than traditional batch methods allow.

When to use Amazon Kinesis:

- Real-time Analytics: For creating live dashboards and detecting fraud instantly.

- IoT and Sensor Data: For ingesting and processing telemetry data from connected devices.

- Log and Event Data: For collecting and analyzing logs in real-time from applications or servers.

- Data Ingestion for Data Lakes: To load streaming data into AWS services like S3, Redshift or OpenSearch.

- Live Video Processing: For ingesting and analyzing streaming video from security cameras or other sources.
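On the consuming side, processing data "as it arrives" means reading records from a shard through a shard iterator. The sketch below shows the low-level loop, assuming `kinesis_client` is a boto3 Kinesis client and that each record holds a JSON document; the stream and shard names are supplied by the caller. Production consumers more commonly rely on AWS Lambda or the Kinesis Client Library instead of polling by hand.

```python
import json


def read_shard(kinesis_client, stream_name, shard_id, max_batches=10):
    """Read up to max_batches batches from one shard, oldest record first."""
    iterator = kinesis_client.get_shard_iterator(
        StreamName=stream_name,
        ShardId=shard_id,
        ShardIteratorType="TRIM_HORIZON",  # start at the oldest available record
    )["ShardIterator"]

    events = []
    for _ in range(max_batches):
        if iterator is None:  # the shard was closed and has been fully read
            break
        response = kinesis_client.get_records(ShardIterator=iterator, Limit=100)
        # Assumes every record payload is a JSON document.
        events.extend(json.loads(record["Data"]) for record in response["Records"])
        iterator = response.get("NextShardIterator")
    return events
```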

6. Difference between Kinesis Data Streams and Kinesis Data Firehose.

Answer: The table below summarizes the differences between Kinesis Data Streams and Kinesis Data Firehose.

| Feature | Kinesis Data Streams | Kinesis Data Firehose |
| --- | --- | --- |
| Service Type | Streaming data platform | Fully managed delivery service |
| Control Level | Full developer control over processing | Minimal configuration, automatic processing |
| Data Processing | Custom processing using applications like Lambda or consumers | Automatic data delivery without custom coding |
| Latency | Very low latency (milliseconds) | Higher latency (seconds to minutes, depending on buffering) |
| Scaling | Manual shard scaling in provisioned mode (on-demand mode scales automatically) | Automatic scaling |
| Data Transformation | Requires custom consumer applications | Built-in transformation support |
| Data Storage Delivery | Requires application to push data to storage | Automatically delivers to S3, Redshift, OpenSearch, etc. |
| Use Case | Complex real-time streaming analytics | Simple ingestion and loading into storage or analytics systems |
| Management Effort | Higher operational management | Minimal operational effort |

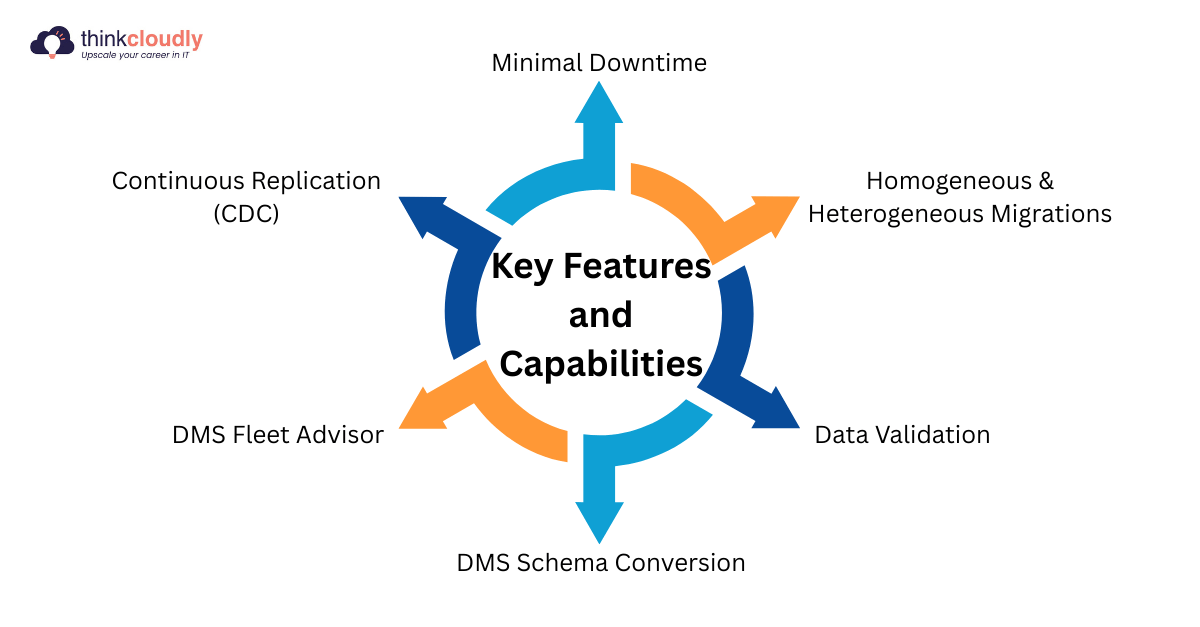
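Scaling in Kinesis Data Streams comes down to shards: the service takes the MD5 hash of each record's partition key as a 128-bit integer and routes the record to the shard whose hash key range contains that value. The sketch below mimics this routing in plain Python. It assumes the shards split the hash space evenly, which is a simplification, and the function names are illustrative only.

```python
import hashlib

HASH_SPACE = 2 ** 128  # MD5 produces a 128-bit value


def hash_key(partition_key):
    """Map a partition key to a 128-bit integer via MD5."""
    digest = hashlib.md5(partition_key.encode("utf-8")).digest()
    return int.from_bytes(digest, "big")


def shard_for(partition_key, shard_count):
    """Index of the target shard, assuming evenly split hash key ranges."""
    range_size = HASH_SPACE // shard_count
    # Clamp so the remainder at the top of the hash space goes to the last shard.
    return min(hash_key(partition_key) // range_size, shard_count - 1)
```

This is why a partition key with many distinct values spreads load across shards, while a single hot key sends all of its traffic to one shard no matter how many shards exist.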

7. What is AWS Database Migration Service (DMS)?

Answer: AWS Database Migration Service (DMS) is a service that lets you migrate relational databases, NoSQL databases and data warehouses into AWS, where you can run them securely in the cloud. DMS supports both one-time migrations and low-latency continuous replication of your data across more than 20 types of database.
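A DMS replication task is told which schemas and tables to migrate through a JSON document of selection rules. The sketch below builds such a document in Python; the schema names and the `include-...` rule naming are hypothetical.

```python
import json


def selection_rule(rule_id, schema, table="%"):
    """One DMS selection rule that includes matching tables ('%' is a wildcard)."""
    return {
        "rule-type": "selection",
        "rule-id": str(rule_id),
        "rule-name": f"include-{schema}-{rule_id}",  # hypothetical naming scheme
        "object-locator": {"schema-name": schema, "table-name": table},
        "rule-action": "include",
    }


def table_mappings(schemas):
    """Build the table-mappings JSON string for a list of schema names."""
    rules = [selection_rule(i, schema) for i, schema in enumerate(schemas, start=1)]
    return json.dumps({"rules": rules})
```

With boto3, the resulting string would be passed as `TableMappings` to `create_replication_task`, together with a `MigrationType` of `full-load` for a one-time migration, `cdc` for continuous replication, or `full-load-and-cdc` for both.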

8. How do you handle AWS Data Ingestion failures?

Answer: Handling AWS data ingestion failures involves a combination of automated retries for temporary errors, dead letter queues for persistent errors, and comprehensive monitoring and alerting to ensure data integrity and prevent data loss.

Core strategies for handling failures:

- Automated Retry Implementation: AWS services like Amazon Kinesis Data Firehose automatically retry delivery after temporary problems, such as network timeouts or service unavailability, for a configurable amount of time.

- Use of Dead Letter Queues (DLQs): Use a DLQ, such as an Amazon SQS queue or an S3 bucket, to store events or records that fail permanently, so they can be reviewed and manually re-processed later.

- Monitoring and Alerting: Monitor key metrics like error counts and failed ingestions with Amazon CloudWatch. Set up CloudWatch alarms and Amazon SNS notifications to alert the responsible team when failures occur.

- Log Everything: Use detailed logging at each stage of the pipeline to isolate where a failure occurred and what caused it. When a failure surfaces as an AWS CLI error, rerunning the command with the `--debug` option can reveal the underlying cause.

- Data Quality and Validation: Apply data validation and schema validation early in the process so that bad records are caught before they cause downstream failures.
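The retry and dead letter queue strategies can be combined in producer code. The sketch below assumes boto3 Kinesis and SQS clients passed in by the caller, entries shaped like `{"Data": bytes, "PartitionKey": str}`, and at most 500 entries per call (the `PutRecords` limit). The queue URL, retry count and delays are hypothetical defaults, not recommendations.

```python
import json
import time


def put_with_retry(kinesis_client, sqs_client, stream_name, dlq_url,
                   entries, max_attempts=3, base_delay=0.5, sleep=time.sleep):
    """Retry only the failed entries; park whatever still fails in the DLQ."""
    pending = list(entries)
    for attempt in range(max_attempts):
        response = kinesis_client.put_records(StreamName=stream_name, Records=pending)
        # Results come back in request order; failed ones carry an ErrorCode.
        pending = [entry for entry, result in zip(pending, response["Records"])
                   if "ErrorCode" in result]
        if not pending:
            return 0
        if attempt < max_attempts - 1:
            sleep(base_delay * 2 ** attempt)  # exponential backoff between attempts
    # Retries exhausted: store the remaining records for later review.
    for entry in pending:
        sqs_client.send_message(
            QueueUrl=dlq_url,
            MessageBody=json.dumps({
                "partition_key": entry["PartitionKey"],
                "data": entry["Data"].decode("utf-8"),  # assumes UTF-8 payloads
            }),
        )
    return len(pending)
```

The return value is the number of records sent to the DLQ, which is a natural number to publish as a CloudWatch metric and alarm on.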

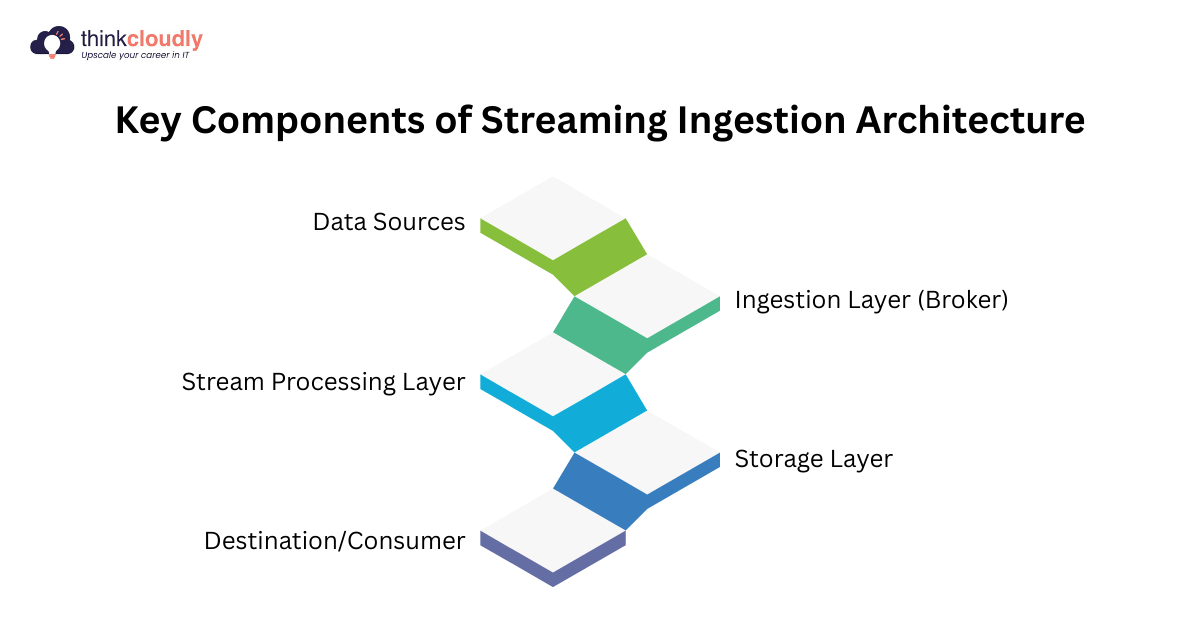

9. What is Streaming Ingestion Architecture?

Answer: A streaming ingestion architecture on AWS processes data continuously as it arrives, as opposed to in batches. It is frequently used as an alternative to traditional database architectures because it provides immediate analytics and low-latency decision making.

10. How do you secure AWS data ingestion pipelines?

Answer: Encrypt data end to end: Transport Layer Security (TLS, the successor to SSL) protects data in transit, and encryption at rest protects stored data. Use strict authentication whenever pulling data from a source, along with least-privilege access control policies. Validate the structure of incoming data and apply masking at the edge.

Key strategies for securing AWS data ingestion pipelines:

- Encryption: All data sent over the internet should be encrypted with Transport Layer Security (TLS), while data at rest should be encrypted with the Advanced Encryption Standard (AES).

- Authentication and Authorization: All AWS data ingestion endpoints must require authentication, such as API keys, OAuth tokens or AWS Identity and Access Management (IAM) roles, to validate where the data is coming from.

- Edge Masking or Protection of PII: As soon as data is received, personally identifiable information (PII) should be hashed or tokenized so that raw values never travel further down the pipeline.

- Network and Infrastructure Security: Use secure API gateways and keep ingestion components, such as the servers that process incoming data, isolated from each other so that a compromise of one does not spread to the rest.

- Data Format or Schema Validation: All data received by an ingestion component must be validated against its expected format so that malicious or malformed data cannot pass through and cause downstream failures.
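Schema validation and PII tokenization at the edge can be sketched with the Python standard library alone. The record schema, the `email` field and the secret key handling below are hypothetical; a real pipeline would load the key from a secrets manager and use its own schema.

```python
import hashlib
import hmac

# Hypothetical schema: field name -> expected Python type.
REQUIRED_FIELDS = {"order_id": str, "email": str, "amount": float}
PII_FIELDS = {"email"}


def validate(record):
    """Reject records with missing fields or unexpected types."""
    for field, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(record.get(field), expected_type):
            raise ValueError(f"invalid or missing field: {field}")
    return record


def tokenize(value, secret_key):
    """Keyed SHA-256 hash: equal inputs give equal tokens, so joins still work."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def sanitize(record, secret_key):
    """Validate a record, then replace its PII fields with tokens."""
    validate(record)
    return {key: tokenize(value, secret_key) if key in PII_FIELDS else value
            for key, value in record.items()}
```

Using a keyed hash (HMAC) rather than a plain hash means someone without the key cannot rebuild the tokens by hashing a list of known email addresses.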

Conclusion

This blog gives you a solid understanding of AWS data ingestion to help you succeed in cloud job interviews and handle AWS interview questions well. Beyond knowing what the terms mean, you will also understand how to make sound architectural decisions based on real-world examples.

This knowledge also matters on the job, because businesses are looking for engineers who can build reliable data pipelines, deliver dependable stream processing, and detect and recover from failures. If you master these areas, you will be better at answering AWS interview questions and at doing your job well in a production environment.